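2023 National Vocational Skills Competition, Cloud Computing: Private Cloud

Walkthrough of the private cloud portion of the 2023 national finals (for reference only)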

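Task 1 Private cloud service setup [5 points]

1.1.1 Basic environment configuration [0.2 points]

1. Set the controller node's hostname to controller and the compute node's hostname to compute;

2. Map IP addresses to hostnames in the hosts file.

Using the provided username and password, log in to the provided OpenStack private cloud platform. Under the current tenant, use the CentOS7.9 image to create two cloud instances with the 4vCPU/12G/100G_50G flavor. The current tenant has one NIC by default; create a second NIC yourself and attach it to the controller and compute nodes (the second NIC's subnet is 10.10.X.0/24, where X is your workstation number; no router is needed). Check the security group rules yourself to ensure normal network communication and ssh access, then configure the servers as follows:

(1) Set the controller node's hostname to controller and the compute node's hostname to compute;

(2) Edit the hosts file to map IP addresses to hostnames;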

[root@controller ~]# hostnamectl set-hostname controller

[root@compute ~]# hostnamectl set-hostname compute

[root@controller ~]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.100.10 controller

192.168.100.20 compute

[root@controller ~]#

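1. The controller node's hostname is controller and the hosts file contains the correct hostname-to-IP mappings: 0.1 points

2. The controller node correctly uses two NICs: 0.1 points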
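The hosts mappings shown in the listing can be added idempotently with a small helper. This is a minimal sketch that assumes the 192.168.100.10/20 addresses from the listing; the function name `add_host_entry` and its optional file argument are illustrative and not part of the competition tooling.

```shell
#!/bin/bash
# add_host_entry IP NAME [FILE]: append "IP NAME" to FILE unless NAME is already present.
# FILE defaults to /etc/hosts; pass another path to try it on a scratch copy first.
add_host_entry() {
    local ip="$1" name="$2" file="${3:-/etc/hosts}"
    # -w matches NAME as a whole word, so "compute" does not match "compute2".
    if ! grep -qw -- "$name" "$file" 2>/dev/null; then
        printf '%s %s\n' "$ip" "$name" >> "$file"
    fi
}
```

Run on both nodes it would be called as `add_host_entry 192.168.100.10 controller` and `add_host_entry 192.168.100.20 compute`; repeating the call does not create duplicate lines.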

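1.1.2 Yum repository configuration [0.2 points]

Using the provided http service address, set up the yum repository file http.repo on both the controller node and the compute node.

The provided http service hosts network yum repositories for centos7.9 and iaas. Use this http source as the network source for installing the iaas platform, and create the yum repository file http.repo on both the controller node and the compute node.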

[root@controller ~]# cat /etc/yum.repos.d/local.repo

[centos]

name=centos

# The competition uses a remote http yum source; this listing uses a local file source instead

baseurl=file:///opt/centos

gpgcheck=0

enabled=1

[iaas]

name=iaas

baseurl=file:///opt/iaas/iaas-repo

gpgcheck=0

enabled=1

[root@controller ~]#

1. The file /etc/yum.repos.d/http.repo contains the correct baseurl paths: 0.2 points
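The listing above uses a local `file://` source, while the task is graded on `http.repo`. A hedged sketch of generating that file follows. The server address is a placeholder because the text does not give it, and the `/centos` and `/iaas/iaas-repo` paths are assumptions that simply mirror the local paths in the listing; the function name `write_http_repo` is illustrative.

```shell
#!/bin/bash
# write_http_repo SERVER [FILE]: generate a yum repo file that points at an HTTP source.
# SERVER is the address of the provided http service (placeholder, not given in the text).
# The /centos and /iaas/iaas-repo paths mirror the local listing and are assumptions.
write_http_repo() {
    local server="$1" file="${2:-/etc/yum.repos.d/http.repo}"
    cat > "$file" <<EOF
[centos]
name=centos
baseurl=http://${server}/centos
gpgcheck=0
enabled=1

[iaas]
name=iaas
baseurl=http://${server}/iaas/iaas-repo
gpgcheck=0
enabled=1
EOF
}
```

After writing the file on both nodes, `yum clean all` followed by `yum repolist` shows whether both repositories resolve.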

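1.1.3 Passwordless ssh [0.2 points]

Configure the controller node so that it can access the compute node without a password.

After configuring passwordless access from the controller node to the compute node, test it by connecting with ssh to the compute node's hostname.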

[root@controller ~]# ssh compute

Last login: Sun Sep 18 21:40:30 2023 from 192.168.100.1

################################

# Welcome to OpenStack #

################################

[root@compute ~]# ssh controller

Last login: Sun Sep 18 21:39:59 2023 from 192.168.100.1

################################

# Welcome to OpenStack #

################################

[root@controller ~]#

1. Passwordless login between the controller node and the compute node works: 0.2 points
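The transcript shows only the resulting logins, not how the trust was set up. One common way is `ssh-keygen` plus `ssh-copy-id`, sketched below. The helper names `run` and `setup_ssh_trust` and the `DRY_RUN` switch are illustrative assumptions; the key path is the default `~/.ssh/id_rsa`.

```shell
#!/bin/bash
# run CMD...: print the command when DRY_RUN=1, otherwise execute it.
run() {
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# setup_ssh_trust HOST...: create a key pair if missing and copy the public key to each HOST.
setup_ssh_trust() {
    local key="$HOME/.ssh/id_rsa" host
    # Generate an RSA key with an empty passphrase only when none exists yet.
    [ -f "$key" ] || run ssh-keygen -t rsa -N "" -f "$key"
    for host in "$@"; do
        # ssh-copy-id asks once for the remote root password.
        run ssh-copy-id -i "$key.pub" "root@$host"
    done
}
```

On the controller node, `setup_ssh_trust compute` followed by `ssh compute` should then log in without a password prompt; running the same on compute with `controller` as the argument covers the reverse direction shown in the transcript.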

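1.1.4 Base installation [0.2 points]

Install the openstack-iaas package on both the controller node and the compute node.

After installing openstack-iaas on both nodes, set the basic variables in the script file on both nodes according to Table 2 (the script file is /etc/openstack/openrc.sh; passwords default to 000000).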

[root@controller ~]# yum install -y openstack-iaas

Loaded plugins: fastestmirror

Determining fastest mirrors

centos | 3.6 kB 00:00:00

iaas | 2.9 kB 00:00:00

Package openstack-iaas-2.0.1-2.noarch already installed and latest version

Nothing to do

[root@controller ~]# sed -i 's/^.//g' /etc/openstack/openrc.sh

# Remove the first character (the leading #) from every line

[root@controller ~]# sed -i 's/PASS=/PASS=000000/g' /etc/openstack/openrc.sh

# Replace PASS= with PASS=000000

[root@controller ~]# cat /etc/openstack/openrc.sh

#--------------------system Config--------------------##

#Controller Server Manager IP. example:x.x.x.x

HOST_IP=192.168.100.10

#Controller HOST Password. example:000000

HOST_PASS=000000

#Controller Server hostname. example:controller

HOST_NAME=controller

#Compute Node Manager IP. example:x.x.x.x

HOST_IP_NODE=192.168.100.20

#Compute HOST Password. example:000000

HOST_PASS_NODE=000000

#Compute Node hostname. example:compute

HOST_NAME_NODE=compute

#--------------------Chrony Config-------------------##

#Controller network segment IP. example:x.x.0.0/16(x.x.x.0/24)

network_segment_IP=192.168.100.0/24

#--------------------Rabbit Config ------------------##

#user for rabbit. example:openstack

RABBIT_USER=openstack

#Password for rabbit user .example:000000

RABBIT_PASS=000000

#--------------------MySQL Config---------------------##

#Password for MySQL root user . exmaple:000000

DB_PASS=000000

#--------------------Keystone Config------------------##

#Password for Keystore admin user. exmaple:000000

DOMAIN_NAME=huhy

ADMIN_PASS=Root@123

DEMO_PASS=Root@123

#Password for Mysql keystore user. exmaple:000000

KEYSTONE_DBPASS=000000

#--------------------Glance Config--------------------##

#Password for Mysql glance user. exmaple:000000

GLANCE_DBPASS=000000

#Password for Keystore glance user. exmaple:000000

GLANCE_PASS=000000

#--------------------Placement Config----------------------##

#Password for Mysql placement user. exmaple:000000

PLACEMENT_DBPASS=000000

#Password for Keystore placement user. exmaple:000000

PLACEMENT_PASS=000000

#--------------------Nova Config----------------------##

#Password for Mysql nova user. exmaple:000000

NOVA_DBPASS=000000

#Password for Keystore nova user. exmaple:000000

NOVA_PASS=000000

#--------------------Neutron Config-------------------##

#Password for Mysql neutron user. exmaple:000000

NEUTRON_DBPASS=000000

#Password for Keystore neutron user. exmaple:000000

NEUTRON_PASS=000000

#metadata secret for neutron. exmaple:000000

METADATA_SECRET=000000

#External Network Interface. example:eth1

INTERFACE_NAME=ens37

#External Network The Physical Adapter. example:provider

Physical_NAME=provider

#First Vlan ID in VLAN RANGE for VLAN Network. exmaple:101

minvlan=1

#Last Vlan ID in VLAN RANGE for VLAN Network. example:200

maxvlan=1000

#--------------------Cinder Config--------------------##

#Password for Mysql cinder user. exmaple:000000

CINDER_DBPASS=000000

#Password for Keystore cinder user. exmaple:000000

CINDER_PASS=000000

#Cinder Block Disk. example:md126p3

BLOCK_DISK=sdb1

#--------------------Swift Config---------------------##

#Password for Keystore swift user. exmaple:000000

SWIFT_PASS=000000

#The NODE Object Disk for Swift. example:md126p4.

OBJECT_DISK=sdb2

#The NODE IP for Swift Storage Network. example:x.x.x.x.

STORAGE_LOCAL_NET_IP=192.168.100.20

#--------------------Trove Config----------------------##

#Password for Mysql trove user. exmaple:000000

TROVE_DBPASS=000000

#Password for Keystore trove user. exmaple:000000

TROVE_PASS=000000

#--------------------Heat Config----------------------##

#Password for Mysql heat user. exmaple:000000

HEAT_DBPASS=000000

#Password for Keystore heat user. exmaple:000000

HEAT_PASS=000000

#--------------------Ceilometer Config----------------##

#Password for Gnocchi ceilometer user. exmaple:000000

CEILOMETER_DBPASS=000000

#Password for Keystore ceilometer user. exmaple:000000

CEILOMETER_PASS=000000

#--------------------AODH Config----------------##

#Password for Mysql AODH user. exmaple:000000

AODH_DBPASS=000000

#Password for Keystore AODH user. exmaple:000000

AODH_PASS=000000

#--------------------ZUN Config----------------##

#Password for Mysql ZUN user. exmaple:000000

ZUN_DBPASS=000000

#Password for Keystore ZUN user. exmaple:000000

ZUN_PASS=000000

#Password for Keystore KURYR user. exmaple:000000

KURYR_PASS=000000

#--------------------OCTAVIA Config----------------##

#Password for Mysql OCTAVIA user. exmaple:000000

OCTAVIA_DBPASS=000000

#Password for Keystore OCTAVIA user. exmaple:000000

OCTAVIA_PASS=000000

#--------------------Manila Config----------------##

#Password for Mysql Manila user. exmaple:000000

MANILA_DBPASS=000000

#Password for Keystore Manila user. exmaple:000000

MANILA_PASS=000000

#The NODE Object Disk for Manila. example:md126p5.

SHARE_DISK=sdb3

#--------------------Cloudkitty Config----------------##

#Password for Mysql Cloudkitty user. exmaple:000000

CLOUDKITTY_DBPASS=000000

#Password for Keystore Cloudkitty user. exmaple:000000

CLOUDKITTY_PASS=000000

#--------------------Barbican Config----------------##

#Password for Mysql Barbican user. exmaple:000000

BARBICAN_DBPASS=000000

#Password for Keystore Barbican user. exmaple:000000

BARBICAN_PASS=000000

###############################################################

#####In the vi editor run :%s/^.\{1\}// to delete the first character (#) of each line#####

###############################################################

[root@controller ~]#

[root@controller ~]# scp /etc/openstack/openrc.sh compute:/etc/openstack/

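# After the transfer, partition the disk into three partitions and write the matching partition names into the variables file (BLOCK_DISK, OBJECT_DISK, SHARE_DISK)

1. Environment variable file configured correctly: 0.2 points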
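The two sed edits to openrc.sh earlier in this step can be wrapped in one function and tried on a scratch copy first. This is a sketch rather than the competition's own procedure: the function name `prepare_openrc` is illustrative, and the second expression is deliberately anchored on end-of-line, which differs from the command above in that it fills only empty passwords.

```shell
#!/bin/bash
# prepare_openrc FILE: uncomment every line and fill empty *PASS= values with 000000.
prepare_openrc() {
    local file="$1"
    # Drop the first character of each line (the leading '#').
    sed -i 's/^.//' "$file"
    # Anchoring on end-of-line touches only empty passwords,
    # so values that are already set are left alone.
    sed -i 's/PASS=$/PASS=000000/' "$file"
}
```

For example, `cp /etc/openstack/openrc.sh /tmp/openrc.sh && prepare_openrc /tmp/openrc.sh` lets you inspect the result before touching the real file. Values such as HOST_IP, INTERFACE_NAME and the disk names still have to be filled in by hand.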

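1.1.5 Database installation and tuning [0.5 points]

Install Mariadb, RabbitMQ and related services on the controller node and tune them. On the controller node, use the iaas-install-mysql.sh script to install Mariadb, Memcached, RabbitMQ and related services. After installation, edit /etc/my.cnf to meet the following requirements:

1. Make the database support mixed-case names (case-insensitive table names);

2. Set the buffer that caches InnoDB table indexes, data and inserts to 4G;

3. Set the database log buffer to 64MB;

4. Set the database redo log size to 256MB;

5. Set the number of redo log files in the group to 2.

6. Change the Memcached configuration so that its memory limit is 512MB and its maximum number of connections is 2048;

7. Change Memcached's hash algorithm to md5;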

[root@controller ~]# iaas-pre-host.sh

[root@compute ~]# iaas-pre-host.sh

# After this step, be sure to log out and reconnect to refresh the session; otherwise the rabbitmq service reports errors

[root@controller ~]# iaas-install-mysql.sh

[root@controller ~]# cat /etc/my.cnf

#

# This group is read both both by the client and the server

# use it for options that affect everything

#

[client-server]

#

# This group is read by the server

#

[mysqld]

# Disabling symbolic-links is recommended to prevent assorted security risks

symbolic-links=0

default-storage-engine = innodb

innodb_file_per_table

collation-server = utf8_general_ci

init-connect = 'SET NAMES utf8'

character-set-server = utf8

max_connections=10000

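innodb_log_buffer_size = 64M

# Size of the in-memory buffer used for writing the log files. A larger buffer can improve performance, but more unwritten data is lost on an unexpected failure. The task requires 64M

innodb_log_file_size = 256M

# Size of each redo log file. A larger value can improve performance but lengthens crash recovery. The task requires 256M

innodb_log_files_in_group = 2

# MySQL writes the redo log in a circular fashion across the files in the group. The task requires 2 files

# 1. Table name case handling: 0 is case-sensitive, 1 is case-insensitive

lower_case_table_names =1

# 2. Set the InnoDB buffer pool to 4G

innodb_buffer_pool_size = 4G

# 3. Maximum size of a transferred packet

max_allowed_packet = 30M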

#

# include all files from the config directory

#

!includedir /etc/my.cnf.d

[root@controller ~]#

[root@controller ~]# cat /etc/sysconfig/memcached

PORT="11211"

USER="memcached"

MAXCONN="2048"

CACHESIZE="512"

OPTIONS="-l 127.0.0.1,::1,controller"

hash_algorithm=md5

1. Database and memcached configured correctly: 0.5 points
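Before restarting the database it can help to compare /etc/my.cnf against the five targets from the task statement (case-insensitive names, 4G buffer pool, 64M log buffer, 256M redo log, 2 log files). The following is a minimal checking sketch; the function names `cnf_value` and `check_mysql_tuning` are illustrative, and only plain `key = value` lines are handled.

```shell
#!/bin/bash
# cnf_value KEY FILE: print the value assigned to KEY in a my.cnf-style file.
# Whitespace around '=' is ignored; commented-out lines do not match.
cnf_value() {
    local key="$1" file="$2"
    sed -n "s/^[[:space:]]*${key}[[:space:]]*=[[:space:]]*//p" "$file" | tail -n 1
}

# check_mysql_tuning FILE: compare the five tuned options against the task's targets.
# Returns 0 when all match, 1 otherwise, printing one line per mismatch.
check_mysql_tuning() {
    local file="$1" rc=0 pair key want got
    for pair in lower_case_table_names=1 innodb_buffer_pool_size=4G \
                innodb_log_buffer_size=64M innodb_log_file_size=256M \
                innodb_log_files_in_group=2; do
        key="${pair%%=*}"
        want="${pair#*=}"
        got="$(cnf_value "$key" "$file")"
        if [ "$got" != "$want" ]; then
            echo "MISMATCH $key: want $want, got ${got:-<unset>}"
            rc=1
        fi
    done
    return $rc
}
```

Calling `check_mysql_tuning /etc/my.cnf` prints nothing when every option matches. After editing, `systemctl restart mariadb` applies the settings.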

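1.1.6 Keystone installation and use [0.5 points]

Install the Keystone service on the controller node and create users.

On the controller node, use the iaas-install-keystone.sh script to install the Keystone service. Then create the OpenStack domain 210Demo containing the projects Engineering and Production. In the domain 210Demo, create the group Devops, which must contain the following users:

1. Robert is a user (member) and administrator (admin) of the Engineering project; email address: Robert@lab.example.com.

2. George is a user (member) of the Engineering project; email address: George@lab.example.com.

3. William is a user (member) and administrator (admin) of the Production project; email address: William@lab.example.com.

4. John is a user (member) of the Production project; email address: John@lab.example.com.

Create the domain 210Demo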

[root@controller ~]# source /etc/keystone/admin-openrc.sh

[root@controller ~]# openstack domain create 210Demo

+-------------+----------------------------------+

| Field | Value |

+-------------+----------------------------------+

| description | |

| enabled | True |

| id | f962300124244efa9527a1b335522a4a |

| name | 210Demo |

| options | {} |

| tags | [] |

+-------------+----------------------------------+

[root@controller ~]#

Create the Engineering and Production projects

[root@controller ~]# openstack project create --domain 210Demo Engineering

+-------------+----------------------------------+

| Field | Value |

+-------------+----------------------------------+

| description | |

| domain_id | f962300124244efa9527a1b335522a4a |

| enabled | True |

| id | d2b59861f6df43998ea04558d4632b61 |

| is_domain | False |

| name | Engineering |

| options | {} |

| parent_id | f962300124244efa9527a1b335522a4a |

| tags | [] |

+-------------+----------------------------------+

[root@controller ~]# openstack project create --domain 210Demo Production

+-------------+----------------------------------+

| Field | Value |

+-------------+----------------------------------+

| description | |

| domain_id | f962300124244efa9527a1b335522a4a |

| enabled | True |

| id | 1bf6d32e545b409197b89d7402ff7dec |

| is_domain | False |

| name | Production |

| options | {} |

| parent_id | f962300124244efa9527a1b335522a4a |

| tags | [] |

+-------------+----------------------------------+

[root@controller ~]#

Create the group Devops

[root@controller ~]# openstack group create --domain 210Demo Devops

+-------------+----------------------------------+

| Field | Value |

+-------------+----------------------------------+

| description | |

| domain_id | f962300124244efa9527a1b335522a4a |

| id | 01312098b02c4fb8bf1d8e8a82b0334e |

| name | Devops |

+-------------+----------------------------------+

[root@controller ~]#

Create user Robert, add it to the Devops group, and grant it the member and admin roles on the Engineering project

[root@controller ~]# openstack user create --domain 210Demo --password 000000 --email Robert@lab.example.com Robert

+---------------------+----------------------------------+

| Field | Value |

+---------------------+----------------------------------+

| domain_id | f962300124244efa9527a1b335522a4a |

| email | Robert@lab.example.com |

| enabled | True |

| id | 4045810cf03a4780acf2c714052e533c |

| name | Robert |

| options | {} |

| password_expires_at | None |

+---------------------+----------------------------------+

[root@controller ~]# openstack group add user Devops Robert

[root@controller ~]# openstack role add --project Engineering --user Robert member

[root@controller ~]# openstack role add --project Engineering --user Robert admin

Create user George, add it to the Devops group, and grant it the member role on the Engineering project

[root@controller ~]# openstack user create --domain 210Demo --password 000000 --email George@lab.example.com George

+---------------------+----------------------------------+

| Field | Value |

+---------------------+----------------------------------+

| domain_id | f962300124244efa9527a1b335522a4a |

| email | George@lab.example.com |

| enabled | True |

| id | 47b811b2ff374ac38547464413f532dc |

| name | George |

| options | {} |

| password_expires_at | None |

+---------------------+----------------------------------+

[root@controller ~]# openstack group add user Devops George

[root@controller ~]# openstack role add --project Engineering --user George member

[root@controller ~]#

Create user William, add it to the Devops group, and grant it the member and admin roles on the Production project

[root@controller ~]# openstack user create --domain 210Demo --password 000000 --email William@lab.example.com William

+---------------------+----------------------------------+

| Field | Value |

+---------------------+----------------------------------+

| domain_id | f962300124244efa9527a1b335522a4a |

| email | William@lab.example.com |

| enabled | True |

| id | 9b83aaaeef354609ba882728921ce757 |

| name | William |

| options | {} |

| password_expires_at | None |

+---------------------+----------------------------------+

[root@controller ~]# openstack group add user Devops William

[root@controller ~]# openstack role add --project Production --user William member

[root@controller ~]# openstack role add --project Production --user William admin

[root@controller ~]#

Create user John, add it to the Devops group, and grant it the member role on the Production project

[root@controller ~]# openstack user create --domain 210Demo --password 000000 --email John@lab.example.com John

+---------------------+----------------------------------+

| Field | Value |

+---------------------+----------------------------------+

| domain_id | f962300124244efa9527a1b335522a4a |

| email | John@lab.example.com |

| enabled | True |

| id | 419aa40221cb49649572468c65a6ff59 |

| name | John |

| options | {} |

| password_expires_at | None |

+---------------------+----------------------------------+

[root@controller ~]# openstack group add user Devops John

[root@controller ~]# openstack role add --project Production --user John member

[root@controller ~]#

1. The 210Demo domain on the platform contains the users and projects required by the task: 0.5 points
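The four user blocks above repeat the same three commands, so they can be driven from a small table. The sketch below reuses exactly the `openstack` invocations from the transcript; the helper names `run`, `create_devops_user` and `create_all_users` and the `DRY_RUN` switch are illustrative assumptions, and the domain, projects and group are expected to exist already.

```shell
#!/bin/bash
# run CMD...: print the command when DRY_RUN=1, otherwise execute it.
run() {
    if [ "${DRY_RUN:-0}" = "1" ]; then echo "$*"; else "$@"; fi
}

# create_devops_user NAME PROJECT ROLE...: create NAME in domain 210Demo,
# add it to group Devops, and grant each ROLE on PROJECT.
create_devops_user() {
    local name="$1" project="$2" role
    shift 2
    run openstack user create --domain 210Demo --password 000000 \
        --email "${name}@lab.example.com" "$name"
    run openstack group add user Devops "$name"
    for role in "$@"; do
        run openstack role add --project "$project" --user "$name" "$role"
    done
}

# create_all_users: the four users from the task statement.
create_all_users() {
    create_devops_user Robert  Engineering member admin
    create_devops_user George  Engineering member
    create_devops_user William Production  member admin
    create_devops_user John    Production  member
}
```

Running `DRY_RUN=1 create_all_users` prints the commands for review; after `source /etc/keystone/admin-openrc.sh`, running `create_all_users` executes them. The result can be checked with `openstack user list --domain 210Demo`.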

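1.1.7 Glance installation and use [0.5 points]

Install the Glance service on the controller node, upload an image to the platform, and set the image format parameters it needs to boot. On the controller node, use the iaas-install-glance.sh script to install the glance service. Then upload the provided coreos_production_pxe.vmlinuz image to the OpenStack platform and name it deploy-vmlinuz. This image is an Ironic deploy image in the Amazon kernel image (aki) format and is needed by the OpenStack Ironic bare metal service.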

[root@controller ~]# openstack image create --disk-format aki --container-format aki --file coreos_production_pxe.vmlinuz deploy-vmlinuz

+------------------+--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

| Field | Value |

+------------------+--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

| checksum | 69ca72c134cac0def0e6a42b4f0fba67 |

| container_format | aki |

| created_at | 2023-10-08T03:18:25Z |

| disk_format | aki |

| file | /v2/images/29e830bc-e7ac-4f65-94f5-7da4ade21949/file |

| id | 29e830bc-e7ac-4f65-94f5-7da4ade21949 |

| min_disk | 0 |

| min_ram | 0 |

| name | deploy-vmlinuz |

| owner | 8bde12ff804e42498661b7454994c446 |

| properties | os_hash_algo='sha512', os_hash_value='7241aeaf86a4f12dab2fccdc4b8ff592f16d13b37e8deda539c97798cdda47623002a4bddd0a89b5d17e6c7bc2eb9e81f4a031699175c11e73dc821030dfc7f4', os_hidden='False' |

| protected | False |

| schema | /v2/schemas/image |

| size | 43288240 |

| status | active |

| tags | |

| updated_at | 2023-10-08T03:18:26Z |

| virtual_size | None |

| visibility | shared |

+------------------+--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

[root@controller ~]#

1. glance service installed correctly: 0.1 points

2. deploy-vmlinuz image has the correct kernel format: 0.4 points

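1.1.8 Nova installation and optimization [0.5 points]

Install the Nova service on the controller node and the compute node, then complete the related Nova configuration. On the controller and compute nodes, use the iaas-install-placement.sh, iaas-install-nova-controller.sh and iaas-install-nova-compute.sh scripts to install the Nova service. In OpenStack, modify the relevant configuration file so that the scheduler uses the caching scheduler, which caches host information and shortens scheduling time.

[root@controller ~]# vi /etc/nova/nova.conf

[scheduler]

driver=caching_scheduler

1. nova scheduler configured correctly: 0.5 points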

1.1.9 Neutron installation [0.2 points]

Install the Neutron service correctly on the controller and compute nodes. Use the provided scripts iaas-install-neutron-controller.sh and iaas-install-neutron-compute.sh to install the neutron service on the controller and compute nodes.

[root@controller ~]# iaas-install-neutron-controller.sh

[root@compute ~]# iaas-install-neutron-compute.sh

1. neutron service installed correctly: 0.1 points

2. neutron linuxbridge agent service started correctly: 0.1 points

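1.1.10 Dashboard installation [0.5 points]

Install the Dashboard service on the controller node. After installation, change the Dashboard's Django data to be stored in files. On the controller node, use the iaas-install-dashboard.sh script to install the Dashboard service. After installation, modify the relevant configuration file to complete the following two operations:

1. Allow logging in to the Dashboard without entering a domain name;

2. Store the Dashboard's Django data in files.

[root@controller ~]# iaas-install-dashboard.sh

[root@controller ~]# vim /etc/openstack-dashboard/local_settings

SESSION_ENGINE = 'django.contrib.sessions.backends.cache'

change to

SESSION_ENGINE = 'django.contrib.sessions.backends.file'

OPENSTACK_KEYSTONE_MULTIDOMAIN_SUPPORT = True

change to

OPENSTACK_KEYSTONE_MULTIDOMAIN_SUPPORT = False

1. Dashboard Django data configured to be stored in files: 0.2 points

2. Dashboard login without entering a domain name configured correctly: 0.3 points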

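1.1.11 Swift installation [0.5 points]

Install the Swift service on the controller node and the compute node, then store the cirros image in segments. Use the iaas-install-swift-controller.sh and iaas-install-swift-compute.sh scripts on the controller and compute nodes respectively to install the Swift service. After installation, use the command line to create a container named examcontainer, upload the cirros-0.3.4-x86_64-disk.img image into it, and store it in segments of 10M each.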

[root@controller ~]# iaas-install-swift-controller.sh

[root@compute ~]# iaas-install-swift-compute.sh

[root@controller ~]# ls

anaconda-ks.cfg cirros-0.3.4-x86_64-disk.img logininfo.txt

[root@controller ~]# swift post examcontainer

[root@controller ~]# swift upload examcontainer -S 10000000 cirros-0.3.4-x86_64-disk.img

cirros-0.3.4-x86_64-disk.img segment 1

cirros-0.3.4-x86_64-disk.img segment 0

cirros-0.3.4-x86_64-disk.img

[root@controller ~]# du -sh cirros-0.3.4-x86_64-disk.img

13M cirros-0.3.4-x86_64-disk.img

[root@controller ~]#

# The image is only 13M, so it is stored as two segments

1. swift service installed correctly: 0.3 points

2. cirros image uploaded in segments correctly: 0.2 points
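The number of segments follows from the file size and the `-S` value: for a file larger than the segment size it is the size divided by the segment size, rounded up. A small sketch of that arithmetic is shown here; the function name `segment_count` is illustrative, and the byte count used in the usage note is an assumed size consistent with the 13M that `du` reports.

```shell
#!/bin/bash
# segment_count FILE_BYTES SEGMENT_BYTES: number of segments for a file larger than
# the segment size, i.e. FILE_BYTES / SEGMENT_BYTES rounded up.
segment_count() {
    local size="$1" seg="$2"
    echo $(( (size + seg - 1) / seg ))
}
```

With an assumed size of 13287936 bytes, `segment_count 13287936 10000000` gives 2, which matches segment 0 and segment 1 in the upload output. On the node itself the real size can be passed in with `segment_count "$(stat -c %s cirros-0.3.4-x86_64-disk.img)" 10000000`.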

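1.1.12 Cinder disk creation [0.5 points]

Install the Cinder service on the controller node and the compute node, then expand the block storage on the compute node. Use the iaas-install-cinder-controller.sh and iaas-install-cinder-compute.sh scripts on the controller and compute nodes respectively to install the Cinder service. Then expand the block storage on the compute node: create one more 5G partition on the compute node and add it to the backend storage of cinder block storage.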

[root@controller ~]# iaas-install-cinder-controller.sh

[root@compute ~]# iaas-install-cinder-compute.sh

[root@compute ~]# lsblk

NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINT

sda 8:0 0 200G 0 disk

├─sda1 8:1 0 1G 0 part /boot

└─sda2 8:2 0 19G 0 part

├─centos-root 253:0 0 17G 0 lvm /

└─centos-swap 253:1 0 2G 0 lvm [SWAP]

sdb 8:16 0 50G 0 disk

├─sdb1 8:17 0 10G 0 part

│ ├─cinder--volumes-cinder--volumes--pool_tmeta 253:2 0 12M 0 lvm

│ │ └─cinder--volumes-cinder--volumes--pool 253:4 0 9.5G 0 lvm

│ └─cinder--volumes-cinder--volumes--pool_tdata 253:3 0 9.5G 0 lvm

│ └─cinder--volumes-cinder--volumes--pool 253:4 0 9.5G 0 lvm

├─sdb2 8:18 0 10G 0 part /swift/node/sdb2

├─sdb3 8:19 0 10G 0 part

└─sdb4 8:20 0 5G 0 part

sr0 11:0 1 4.4G 0 rom

[root@compute ~]#

[root@compute ~]# vgdisplay

--- Volume group ---

VG Name cinder-volumes

System ID

Format lvm2

Metadata Areas 1

Metadata Sequence No 4

VG Access read/write

VG Status resizable

MAX LV 0

Cur LV 1

Open LV 0

Max PV 0

Cur PV 1

Act PV 1

VG Size <10.00 GiB

PE Size 4.00 MiB

Total PE 2559

Alloc PE / Size 2438 / 9.52 GiB

Free PE / Size 121 / 484.00 MiB

VG UUID 3k0yKg-iQB2-b2CM-a0z2-2ddJ-cdG3-8WpyrG

--- Volume group ---

VG Name centos

System ID

Format lvm2

Metadata Areas 1

Metadata Sequence No 3

VG Access read/write

VG Status resizable

MAX LV 0

Cur LV 2

Open LV 2

Max PV 0

Cur PV 1

Act PV 1

VG Size <19.00 GiB

PE Size 4.00 MiB

Total PE 4863

Alloc PE / Size 4863 / <19.00 GiB

Free PE / Size 0 / 0

VG UUID acAXNK-eqKm-qs9b-ly3T-R3Sh-8qyv-nELNWv

[root@compute ~]# vgextend cinder-volumes /dev/sdb4

Volume group "cinder-volumes" successfully extended

[root@compute ~]# vgdisplay

--- Volume group ---

VG Name cinder-volumes

System ID

Format lvm2

Metadata Areas 2

Metadata Sequence No 5

VG Access read/write

VG Status resizable

MAX LV 0

Cur LV 1

Open LV 0

Max PV 0

Cur PV 2

Act PV 2

VG Size 14.99 GiB

PE Size 4.00 MiB

Total PE 3838

Alloc PE / Size 2438 / 9.52 GiB

Free PE / Size 1400 / <5.47 GiB

VG UUID 3k0yKg-iQB2-b2CM-a0z2-2ddJ-cdG3-8WpyrG

--- Volume group ---

VG Name centos

System ID

Format lvm2

Metadata Areas 1

Metadata Sequence No 3

VG Access read/write

VG Status resizable

MAX LV 0

Cur LV 2

Open LV 2

Max PV 0

Cur PV 1

Act PV 1

VG Size <19.00 GiB

PE Size 4.00 MiB

Total PE 4863

Alloc PE / Size 4863 / <19.00 GiB

Free PE / Size 0 / 0

VG UUID acAXNK-eqKm-qs9b-ly3T-R3Sh-8qyv-nELNWv

[root@compute ~]#

1. cinder backend storage expanded successfully: 0.5 points

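1.1.13 Disable ping on the host [0.5 points]

Modify the relevant configuration file on the controller node so that other nodes cannot ping it.

vi /etc/sysctl.conf

# 0: ICMP echo requests are answered (ping allowed); 1: they are ignored (ping blocked)

net.ipv4.icmp_echo_ignore_all = 1

sysctl -p

1. System configuration file correct: 0.5 points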

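Task 2 Private cloud service operations [15 points]

1.2.1 Heat orchestration: create a user [1 point]

Write a Heat template create_user.yaml that creates a user named heat-user. Using the OpenStack private cloud platform you built, write a heat template (heat_template_version: 2016-04-08) that creates a domain named "chinaskills", a tenant named beijing_group under that domain, and a user named cloud under that tenant. Save the file as /root/user_create.yml. (The competition system will execute the yaml file, so make sure the environment allows it to run.)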

[root@controller ~]# cat create_user.yaml

heat_template_version: 2016-04-08
resources:
  user:
    type: OS::Keystone::User
    properties:
      name: heat-user
      password: "123456"
      domain: default
      default_project: admin
      roles: [{"role": admin, "project": admin}]

[root@controller ~]# openstack stack create -t create_user.yaml test-user

+---------------------+--------------------------------------+

| Field | Value |

+---------------------+--------------------------------------+

| id | e0249dae-19d3-48fd-b3a8-fa57af083765 |

| stack_name | test-user |

| description | No description |

| creation_time | 2023-10-05T09:06:44Z |

| updated_time | None |

| stack_status | CREATE_IN_PROGRESS |

| stack_status_reason | Stack CREATE started |

+---------------------+--------------------------------------+

[root@controller ~]# openstack user list

+----------------------------------+-------------------+

| ID | Name |

+----------------------------------+-------------------+

| 39e9cdd82c474808940e31ade25c2598 | admin |

| 86b5d5d442134ff9a0c61f297949fb2a | demo |

| be0000a08553469da89c9eec73adc6ae | glance |

| 6fbfc10d83cd42e7b1e2fa2437222898 | placement |

| 2f8a154305444599a35743b5f6915a6a | nova |

| 5f355d0887694a4e8108b77fb2ba978c | neutron |

| af2a87d4b9f74091a0e59d73a12c1ea0 | heat |

| 199d3d04353544769bf080c49103b6aa | heat_domain_admin |

| 8f8937fa4cac4026b2be760fa184ffec | heat-user |

+----------------------------------+-------------------+

[root@controller ~]#

[root@controller ~]# cat user_create.yml

heat_template_version: 2016-04-08
resources:
  chinaskills:
    type: OS::Keystone::Domain
    properties:
      name: chinaskills
  beijing_group:
    type: OS::Keystone::Project
    properties:
      name: beijing_group
      domain: { get_resource: chinaskills }
  cloud_user:
    type: OS::Keystone::User
    properties:
      name: cloud
      domain: { get_resource: chinaskills }
      password: "000000"
      default_project: { get_resource: beijing_group }

[root@controller ~]# openstack stack create -t user_create.yml test

+---------------------+--------------------------------------+

| Field | Value |

+---------------------+--------------------------------------+

| id | 0ac5aaef-73e7-462d-84a9-b9ae05acbbc8 |

| stack_name | test |

| description | No description |

| creation_time | 2023-10-05T09:51:44Z |

| updated_time | None |

| stack_status | CREATE_IN_PROGRESS |

| stack_status_reason | Stack CREATE started |

+---------------------+--------------------------------------+

[root@controller ~]# openstack domain list

+----------------------------------+-------------+---------+--------------------------+

| ID | Name | Enabled | Description |

+----------------------------------+-------------+---------+--------------------------+

| 2141d34db6ab4ae09ab21ba9c9ed3f0f | heat | True | Stack projects and users |

| d1a0c1fb7520410ba583e9c8b6afc133 | chinaskills | True | |

| default | Default | True | The default domain |

+----------------------------------+-------------+---------+--------------------------+

[root@controller ~]#

[root@controller ~]# openstack project show beijing_group

+-------------+----------------------------------+

| Field | Value |

+-------------+----------------------------------+

| description | |

| domain_id | d1a0c1fb7520410ba583e9c8b6afc133 |

| enabled | True |

| id | 4dd4e129959d4eeb879367b549a666d9 |

| is_domain | False |

| name | beijing_group |

| options | {} |

| parent_id | d1a0c1fb7520410ba583e9c8b6afc133 |

| tags | [] |

+-------------+----------------------------------+

[root@controller ~]# openstack user show cloud

+---------------------+----------------------------------+

| Field | Value |

+---------------------+----------------------------------+

| description | |

| domain_id | d1a0c1fb7520410ba583e9c8b6afc133 |

| enabled | True |

| id | aa57e11217924cac9d9a18ffa139fe41 |

| name | cloud |

| options | {} |

| password_expires_at | None |

+---------------------+----------------------------------+

[root@controller ~]#

1. Executing the heat template successfully creates the domain, the tenant and the user: 1 point

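1.2.2 KVM optimization [1 point]

On the OpenStack platform, modify the relevant configuration file to enable -device virtio-net-pci in kvm. This can be done on the OpenStack private cloud platform you built or on the all-in-one platform provided by the competition.

# Edit /etc/nova/nova.conf and add the following parameter under the [libvirt] section (older releases used libvirt_use_virtio_for_bridges=true under [DEFAULT])

use_virtio_for_bridges=true

1. nova KVM configuration correct: 1 point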

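1.2.3 NFS as the Glance backend storage [1 point]

Using the OpenStack private cloud platform, create a cloud instance, install the NFS service on it, and connect it as the Glance backend storage. Using the OpenStack private cloud platform provided by the competition, create a cloud instance (CentOS7.9 image, a flavor with a 50G ephemeral disk) and configure it as the nfs server, sharing its 50G disk through the /mnt/test directory (create the directory if it does not exist). Then configure the controller node as the nfs client, with /mnt/test serving as the mounted directory for the glance backend storage.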

[root@nfs-server ~]# mkdir /mnt/test

[root@nfs-server ~]# vi /etc/exports

/mnt/test 10.24.200.0/24(rw,no_root_squash,no_all_squash,sync,anonuid=501,anongid=501)

[root@nfs-server ~]# exportfs -r

[root@controller ~]# mount -t nfs 10.24.193.142:/mnt/test /var/lib/glance/images/

[root@controller ~]# df -h

Filesystem Size Used Avail Use% Mounted on

/dev/vda1 50G 2.7G 48G 6% /

devtmpfs 5.8G 0 5.8G 0% /dev

tmpfs 5.8G 4.0K 5.8G 1% /dev/shm

tmpfs 5.8G 49M 5.8G 1% /run

tmpfs 5.8G 0 5.8G 0% /sys/fs/cgroup

/dev/loop0 9.4G 33M 9.4G 1% /swift/node/vda5

tmpfs 1.2G 0 1.2G 0% /run/user/0

10.24.193.142:/mnt/test 20G 865M 20G 5% /var/lib/glance/images

[root@controller ~]# cd /var/lib/glance/

[root@controller glance]# chown glance:glance images/

1. Shared directory mounted correctly: 0.5 points

2. Owner and group of the shared directory correct: 0.5 points

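1.2.4 Redis master/replica [1 point]

Using the OpenStack private cloud platform provided by the competition, create two cloud instances and configure them as a redis master/replica setup. Request two cloud instances running CentOS7.9, use the provided http source to install and start the redis service on both nodes, and require a password for redis access, set to 123456. Then configure the two redis nodes as master and replica.

See the walkthrough for the national competition from two years earlier.

1. redis master/replica cluster deployed correctly: 1 point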
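Since no commands are shown for this task, here is a hedged sketch of the redis.conf directives such a setup typically needs. The function name `redis_auth_conf` is illustrative, port 6379 is the redis default, and the master address is a placeholder you must supply; `slaveof` is used because the redis version in the provided source is not stated (newer releases also accept `replicaof`).

```shell
#!/bin/bash
# redis_auth_conf PASSWORD [MASTER_IP]: print the redis.conf directives for one node.
# Without MASTER_IP the output is for the master; with it, for the replica.
redis_auth_conf() {
    local pass="$1" master_ip="${2:-}"
    echo "bind 0.0.0.0"                  # listen on all interfaces so the peer can connect
    echo "requirepass $pass"             # clients must authenticate with this password
    if [ -n "$master_ip" ]; then
        echo "slaveof $master_ip 6379"   # replicate from the master (replicaof in Redis 5+)
        echo "masterauth $pass"          # password the replica uses toward the master
    fi
}
```

A possible use, assuming the configuration file is /etc/redis.conf: on the master, `redis_auth_conf 123456 >> /etc/redis.conf`; on the replica, `redis_auth_conf 123456 <master-ip> >> /etc/redis.conf`; then `systemctl restart redis` on both. `redis-cli -a 123456 info replication` on the master should then list one connected replica.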

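1.2.5 Linux tuning: dirty data writeback [1 point]

Modify the system configuration file to temporarily change the disk writeback time to 60 seconds. Linux keeps dirty data in memory, and by default the system writes dirty data back to disk after 30 seconds. Modify the system configuration file to temporarily change this writeback time to 60 seconds. When finished, submit the controller node's username, password and IP address in the answer box.

[root@controller ~]# vi /etc/sysctl.conf

# Add the following line

vm.dirty_expire_centisecs=6000

[root@controller ~]# sysctl -p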

vm.dirty_expire_centisecs = 6000
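The value 6000 comes from the unit of `vm.dirty_expire_centisecs`, which is hundredths of a second, so 60 seconds is 60 × 100. A tiny sketch of the conversion follows; the function name `seconds_to_centisecs` is illustrative.

```shell
#!/bin/bash
# seconds_to_centisecs SECONDS: convert seconds to the hundredths of a second
# used by vm.dirty_expire_centisecs.
seconds_to_centisecs() {
    echo $(( $1 * 100 ))
}
```

Because the task asks for a temporary change, `sysctl -w vm.dirty_expire_centisecs="$(seconds_to_centisecs 60)"` is an alternative that applies the value until the next reboot without editing /etc/sysctl.conf. Whether the grader accepts that form instead of the file edit is not stated in the task.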

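1.2.6 Glance tuning [1 point]

On the OpenStack platform, modify the relevant configuration file to set the number of worker subprocesses to 2. By default glance-api handles requests with 0 subprocesses, that is, with the main process only. Modify the relevant configuration file to set the number of subprocesses to 2, so that one main process plus 2 subprocesses handle requests concurrently.

[root@controller ~]# vi /etc/glance/glance-api.conf

#workers = <None>

change to

workers = 2

[root@controller ~]# systemctl restart openstack-glance-*

1. glance service process configuration correct: 1 point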

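1.2.7 Ceph deployment [1 point]

Use the provided ceph.tar.gz package to install the ceph service and complete initialization. Using the provided ceph-14.2.22.tar.gz package, create three cloud instances running CentOS7.9 on the OpenStack platform and use these three nodes to install ceph and complete initialization. The first node is the mon/osd node; the second and third nodes are osd nodes. After deploying ceph, create three pools: vms, images and volumes.

Basic environment setup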

| IP | Hostname |

|---|---|

| 10.0.0.10 | storage01 |

| 10.0.0.11 | storage02 |

| 10.0.0.12 | storage03 |

Disable the firewall and SELinux

- All nodes

sed -i "s/SELINUX=enforcing/SELINUX=disabled/g" /etc/selinux/config; setenforce 0; systemctl stop firewalld; systemctl disable firewalld

Configure the offline repository

- storage01 node

tar xvf ceph.tar.gz -C /opt/

mkdir /etc/yum.repos.d/bak

mv /etc/yum.repos.d/CentOS-* /etc/yum.repos.d/bak/

cat >> /etc/yum.repos.d/local.repo << EOF

[ceph]

name=ceph

baseurl=file:///opt/ceph/

gpgcheck=0

EOF

yum clean all; yum makecache

yum install -y vsftpd

echo "anon_root=/opt" >> /etc/vsftpd/vsftpd.conf

systemctl enable --now vsftpd

- storage02/03 nodes

mkdir /etc/yum.repos.d/bak

mv /etc/yum.repos.d/CentOS-* /etc/yum.repos.d/bak/

cat >> /etc/yum.repos.d/local.repo << EOF

[ceph]

name=ceph

baseurl=ftp://10.0.0.10/ceph/

gpgcheck=0

EOF

yum clean all; yum makecache

Configure hosts resolution

- All nodes

hostnamectl set-hostname storage01   # use storage02 and storage03 on the other two nodes

cat >> /etc/hosts << EOF

10.0.0.10 storage01

10.0.0.11 storage02

10.0.0.12 storage03

EOF

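Configure passwordless SSH

- storage01

# Generate the key pair first if /root/.ssh/id_rsa does not exist yet

ssh-keygen -t rsa -N "" -f /root/.ssh/id_rsa

ssh-copy-id -i /root/.ssh/id_rsa.pub root@storage01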

ssh-copy-id -i /root/.ssh/id_rsa.pub root@storage02

ssh-copy-id -i /root/.ssh/id_rsa.pub root@storage03

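Install and deploy the ceph cluster

1. Install the service

- storage01 node

yum install -y ceph-deploy

2. Configure the cluster

- storage01 node

mkdir ceph-cluster

cd ceph-cluster

# A dependency is missing from the package set, so add the Aliyun EPEL repository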

wget -O /etc/yum.repos.d/epel.repo https://mirrors.aliyun.com/repo/epel-7.repo

yum install python2-pip.noarch -y

ceph-deploy new --cluster-network 10.0.0.0/24 --public-network 10.0.0.0/24 storage01

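- Install on all nodes

yum install -y ceph ceph-radosgw

- storage01 node

ceph-deploy install --no-adjust-repos storage01 storage02 storage03

- Set up the initial MON node and gather all keys (storage01 node)

# No host needs to be specified; it is taken from the configuration file

ceph-deploy mon create-initial

# Copy the configuration file and the admin keyring to every node of the Ceph cluster, so that the "ceph" command does not need the MON address and ceph.client.admin.keyring specified explicitly each time

ceph-deploy admin storage01 storage02 storage03

- Configure mgr

ceph-deploy mgr create storage02

- Install on all nodes

yum install ceph-common -y

Add OSDs

- storage01 node

- Add OSDs to the RADOS cluster

ceph-deploy disk list storage01 storage02 storage03

- storage01 node

- On the admin node, use ceph-deploy to wipe all partition tables and data from the disks that are dedicated to OSDs. The command format is "ceph-deploy disk zap {osd-server-name} {disk-name}". Note that this step erases all data on the target device. The commands below wipe the sdb disk used for the OSD on each machine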

ceph-deploy disk zap storage01 /dev/sdb

ceph-deploy disk zap storage02 /dev/sdb

ceph-deploy disk zap storage03 /dev/sdb

- storage01 node

ceph-deploy osd create storage01 --data /dev/sdb

ceph-deploy osd create storage02 --data /dev/sdb

ceph-deploy osd create storage03 --data /dev/sdb

Add monitor nodes

- storage01 node

ceph-deploy mon add storage02

ceph-deploy mon add storage03

Add manager nodes

- storage01 node

ceph-deploy mgr create storage01

Disable insecure mode

- storage01 node

ceph config set mon auth_allow_insecure_global_id_reclaim false

1. ceph cluster status is OK: 0.5 points

2. ceph cluster osds correct: 0.5 points

1.2.8 Glance 对接 Ceph 存储[1 分]

修改 OpenStack 平台中 Glance 服务的配置文件,将 Glance 后端存储改为Ceph 存储。在自己搭建的 OpenStack 平台中修改 glance 服务的相关配置文件,将glance 后端存储改为 ceph 存储。也就是所以的镜像会上传至 ceph 的 images pool中。通过命令使用 cirros-0.3.4-x86_64-disk.img 镜像文件上传至云平台中,镜像命名为 cirros。

1.2.9 Cinder 对接 Ceph 存储[1 分]

修改 OpenStack 平台中 cinder服务的配置文件,将 cinder后端存储改为 Ceph存储。修改 OpenStack 平台中 cinder 服务的相关配置文件,将 cinder 后端存储改为 ceph 存储。使创建的 cinder 块存储卷在 ceph 的 volumes pool 中。配置完成后,在 OpenStack 平台中使用创建卷 cinder-volume1,大小为 1G。

1.检查 cinder 服务存储配置正确计 1 分

1.2.10 Nova 对接 Ceph 存储[1 分]

修改 OpenStack 平台中 Nova 服务的配置文件,将 Nova 后端存储改为 Ceph存储。修改 OpenStack 平台中 nova 服务的相关配置文件,将 nova 后端存储改为ceph 存储。使 nova 所创建的虚拟机都存储在 ceph 的 vms pool 中。配置完成后在 OpenStack 平台中使用命令创建云主机 server1。

1.检查 nova 服务存储配置正确计 0.5 分

2.检查云主机在 vms pool 中正确计 0.5 分

openstack T版对接ceph 14版本做glance、nova、cinder后端存储

| Node | IP |
|---|---|
| controller | 192.168.200.10 |
| compute | 192.168.200.20 |
| storage01 | 192.168.200.30 |
| storage02 | 192.168.200.31 |
| storage03 | 192.168.200.32 |

Create the storage pools for Cinder, Glance, and Nova

[root@storage01 ceph-cluster]# ceph osd pool create volumes 8

pool 'volumes' created

[root@storage01 ceph-cluster]# ceph osd pool create images 8

pool 'images' created

[root@storage01 ceph-cluster]# ceph osd pool create vms 8

pool 'vms' created

[root@storage01 ceph-cluster]# ceph osd lspools

4 volumes

5 images

6 vms
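On Ceph 14, a pool that holds objects but has not been tagged with an application can raise the `POOL_APP_NOT_ENABLED` health warning, which may matter for a "cluster status is OK" check. The original walkthrough does not show this step, so the following is only a sketch: it prints the `rbd pool init` commands for the three pools by default, and runs them only when the (hypothetical) `DRY_RUN` variable is set to 0.

```shell
#!/usr/bin/env bash
# Tag each pool for RBD use with "rbd pool init".
# init_rbd_pools and DRY_RUN are illustrative names, not from the original text.
init_rbd_pools() {
    local pool
    for pool in "$@"; do
        if [ "${DRY_RUN:-1}" = "1" ]; then
            echo "rbd pool init $pool"      # dry run: only show the command
        else
            rbd pool init "$pool"           # real run: needs a working Ceph client
        fi
    done
}

init_rbd_pools volumes images vms
```

Running it on storage01 as `DRY_RUN=0 bash script.sh` would execute the commands; `ceph health detail` can then be used to confirm whether any warning remains.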

Create the users and keys

[root@storage01 ceph-cluster]# ceph auth get-or-create client.cinder mon "allow r" osd "allow class-read object_prefix rbd_children,allow rwx pool=volumes,allow rwx pool=vms,allow rx pool=images"

[client.cinder]

key = AQDRE3tkDVGeFxAAPkGPKGlqh74pRl2dzIJXAw==

[root@storage01 ceph-cluster]# ceph auth get-or-create client.glance mon "allow r" osd "allow class-read object_prefix rbd_children,allow rwx pool=images"

[client.glance]

key = AQDaE3tkKTQCIRAAwRQ/VeIjL4G5GjmsJIbTwg==

[root@storage01 ceph-cluster]#

Create the ceph directory on the OpenStack nodes (on the T release it already exists by default, so -p avoids an error)

[root@controller ~]# mkdir -p /etc/ceph/

[root@compute ~]# mkdir -p /etc/ceph/

Export the glance and cinder keyrings from Ceph and import them into OpenStack

[root@storage01 ceph-cluster]# ceph auth get client.glance -o ceph.client.glance.keyring

exported keyring for client.glance

[root@storage01 ceph-cluster]# ceph auth get client.cinder -o ceph.client.cinder.keyring

exported keyring for client.cinder

[root@storage01 ceph-cluster]#

[root@storage01 ceph-cluster]# scp ceph.client.glance.keyring root@192.168.200.10:/etc/ceph/

[root@storage01 ceph-cluster]# scp ceph.client.glance.keyring root@192.168.200.20:/etc/ceph/

[root@storage01 ceph-cluster]# scp ceph.client.cinder.keyring root@192.168.200.10:/etc/ceph/

[root@storage01 ceph-cluster]# scp ceph.client.cinder.keyring root@192.168.200.20:/etc/ceph/

Copy the Ceph configuration file to the OpenStack nodes

[root@storage01 ceph-cluster]# scp ceph.conf root@192.168.200.20:/etc/ceph/

[root@storage01 ceph-cluster]# scp ceph.conf root@192.168.200.10:/etc/ceph/

Add the libvirt secret on the compute node

[root@compute ~]# cd /etc/ceph/

[root@compute ceph]# UUID=$(uuidgen)

[root@compute ceph]# cat > secret.xml << EOF

<secret ephemeral='no' private='no'>

<uuid>$UUID</uuid>

<usage type='ceph'>

<name>client.cinder secret</name>

</usage>

</secret>

EOF

[root@compute ceph]# virsh secret-define --file secret.xml

Secret 8ea0cbae-86a9-4c1c-9f03-fd4b144b8839 created

[root@compute ceph]# cat ceph.client.cinder.keyring

[client.cinder]

key = AQDRE3tkDVGeFxAAPkGPKGlqh74pRl2dzIJXAw==

caps mon = "allow r"

caps osd = "allow class-read object_prefix rbd_children,allow rwx pool=volumes,allow rwx pool=vms,allow rx pool=images"

[root@compute ceph]# virsh secret-set-value --secret ${UUID} --base64 $(cat ceph.client.cinder.keyring | grep key | awk -F ' ' '{print $3}')

Secret value set

[root@compute ceph]# virsh secret-list

UUID Usage

--------------------------------------------------------------------------------

8ea0cbae-86a9-4c1c-9f03-fd4b144b8839 ceph client.cinder secret

[root@compute ceph]#
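The `grep key | awk` pipeline used for `virsh secret-set-value` above pulls the base64 key out of the keyring file. A slightly stricter sketch of the same extraction is shown here; `keyring_key` is a hypothetical helper name, and it assumes the `key = <value>` layout seen in the keyring listing above.

```shell
#!/usr/bin/env bash
# Print the base64 key stored in a Ceph keyring file.
# keyring_key is an illustrative helper, not part of Ceph or libvirt.
keyring_key() {
    # Match only the line whose first field is exactly "key", so that
    # section headers and "caps ..." lines can never be picked up.
    awk '$1 == "key" && $2 == "=" { print $3; exit }' "$1"
}
```

It could be used as `virsh secret-set-value --secret "$UUID" --base64 "$(keyring_key /etc/ceph/ceph.client.cinder.keyring)"`. On a node with admin access to the cluster, `ceph auth get-key client.cinder` returns the same value directly.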

Install the client tools

[root@controller ~]# yum install -y ceph-common

[root@compute ~]# yum install -y ceph-common

Integrate the Glance backend storage

Change the ownership of the keyring file

[root@controller ~]# chown glance.glance /etc/ceph/ceph.client.glance.keyring

[root@controller ~]# vi /etc/glance/glance-api.conf

[glance_store]

#stores = file,http

#default_store = file

#filesystem_store_datadir = /var/lib/glance/images/

stores = rbd,file,http

default_store = rbd

rbd_store_pool = images

rbd_store_user = glance

rbd_store_ceph_conf = /etc/ceph/ceph.conf

rbd_store_chunk_size = 8
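Since the grading checks whether the storage configuration is correct, it can help to read a setting back before restarting the service. Below is a minimal sketch of an INI reader in shell; `ini_get` is a hypothetical helper, and it handles only simple `key = value` lines under a `[section]` header.

```shell
#!/usr/bin/env bash
# Print the value of KEY inside [SECTION] of an INI-style file.
# ini_get is an illustrative helper; commented-out lines are ignored
# because their key name would start with "#".
ini_get() {
    local file="$1" section="$2" key="$3"
    awk -v s="[$section]" -v k="$key" '
        /^[ \t]*\[/ { in_s = ($1 == s); next }     # entering a new section
        in_s && index($0, "=") {
            name = substr($0, 1, index($0, "=") - 1)
            val  = substr($0, index($0, "=") + 1)
            gsub(/^[ \t]+|[ \t]+$/, "", name)      # trim whitespace
            gsub(/^[ \t]+|[ \t]+$/, "", val)
            if (name == k) { print val; exit }
        }' "$file"
}
```

For example, `ini_get /etc/glance/glance-api.conf glance_store default_store` should print `rbd` once the edit above has been saved. The same helper applies to the `[libvirt]` section of nova.conf and the `[ceph]` section of cinder.conf.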

Restart the services

[root@controller ~]# systemctl restart openstack-glance*

Test

[root@controller ~]# openstack image create --disk-format qcow2 cirros-ceph --file /opt/iaas/images/cirros-0.3.4-x86_64-disk.img

+------------------+--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

| Field | Value |

+------------------+--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

| checksum | ee1eca47dc88f4879d8a229cc70a07c6 |

| container_format | bare |

| created_at | 2023-06-03T14:36:37Z |

| disk_format | qcow2 |

| file | /v2/images/c015136d-f3e0-4150-abd2-f7fd3fd6dbd7/file |

| id | c015136d-f3e0-4150-abd2-f7fd3fd6dbd7 |

| min_disk | 0 |

| min_ram | 0 |

| name | cirros-ceph |

| owner | fb7a2a10b81f43fcbf4ccef895c56937 |

| properties | os_hash_algo='sha512', os_hash_value='1b03ca1bc3fafe448b90583c12f367949f8b0e665685979d95b004e48574b953316799e23240f4f739d1b5eb4c4ca24d38fdc6f4f9d8247a2bc64db25d6bbdb2', os_hidden='False' |

| protected | False |

| schema | /v2/schemas/image |

| size | 13287936 |

| status | active |

| tags | |

| updated_at | 2023-06-03T14:36:42Z |

| virtual_size | None |

| visibility | shared |

+------------------+--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

[root@controller ~]#

Check on the Ceph node

[root@storage01 ceph]# rbd ls images

c015136d-f3e0-4150-abd2-f7fd3fd6dbd7

The ID matches that of the image created in OpenStack
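The comparison by eye can also be scripted. This is a small sketch, where `listed_in` is a hypothetical helper that succeeds only if the given ID appears as an exact line in the listing piped into it.

```shell
#!/usr/bin/env bash
# Succeed if the ID given as $1 is an exact, whole-line match in stdin.
# listed_in is an illustrative helper name.
listed_in() {
    grep -qxF -- "$1"
}
```

With admin credentials loaded, a check could look like `rbd ls images | listed_in "$(openstack image show -f value -c id cirros-ceph)" && echo match`, assuming the `rbd` command is run where the Ceph admin keyring is available.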

Integrate the Nova backend storage

Edit the configuration file on the compute node

[root@compute ceph]# vi /etc/nova/nova.conf

[DEFAULT]

.....

live_migration_flag = "VIR_MIGRATE_UNDEFINE_SOURCE,VIR_MIGRATE_PEER2PEER,VIR_MIGRATE_LIVE"

[libvirt]

images_type = rbd

images_rbd_pool = vms

images_rbd_ceph_conf = /etc/ceph/ceph.conf

rbd_user = cinder

rbd_secret_uuid = 8ea0cbae-86a9-4c1c-9f03-fd4b144b8839

Restart the service

[root@compute ceph]# systemctl restart openstack-nova-compute.service

Test

[root@controller ~]# openstack server list

+--------------------------------------+------+--------+--------------------+-------------+---------+

| ID | Name | Status | Networks | Image | Flavor |

+--------------------------------------+------+--------+--------------------+-------------+---------+

| d999b371-9e98-4cdb-98b8-407e900c631c | test | ACTIVE | int-net=10.0.0.206 | cirros-ceph | m1.tiny |

+--------------------------------------+------+--------+--------------------+-------------+---------+

[root@storage01 ceph]# rbd ls vms

d999b371-9e98-4cdb-98b8-407e900c631c_disk

Integrate the Cinder backend storage

Change the keyring file ownership on the OpenStack nodes

[root@controller ~]# chown cinder.cinder /etc/ceph/ceph.client.cinder.keyring

[root@compute ~]# chown cinder.cinder /etc/ceph/ceph.client.cinder.keyring

Edit the configuration file on the controller node

[root@controller ~]# vi /etc/cinder/cinder.conf

[DEFAULT]

.....

default_volume_type = ceph

Restart the services

[root@controller ~]# systemctl restart *cinder*

Edit the configuration file on the compute node

[root@compute ceph]# vi /etc/cinder/cinder.conf

[DEFAULT]

.....

enabled_backends = ceph,lvm

[ceph]

volume_driver = cinder.volume.drivers.rbd.RBDDriver

rbd_pool = volumes

rbd_ceph_conf = /etc/ceph/ceph.conf

rbd_flatten_volume_from_snapshot = false

rbd_max_clone_depth = 5

rbd_store_chunk_size = 4

rados_connect_timeout = -1

glance_api_version = 2

rbd_user = cinder

rbd_secret_uuid = 8ea0cbae-86a9-4c1c-9f03-fd4b144b8839

volume_backend_name = ceph

Restart the service

[root@compute ceph]# systemctl restart openstack-cinder-volume.service

Test

[root@controller ~]# openstack volume type create ceph

+-------------+--------------------------------------+

| Field | Value |

+-------------+--------------------------------------+

| description | None |

| id | c357956b-2521-439d-af59-ae2e9cc5c5aa |

| is_public | True |

| name | ceph |

+-------------+--------------------------------------+

[root@controller ~]# cinder --os-username admin --os-tenant-name admin type-key ceph set volume_backend_name=ceph

WARNING:cinderclient.shell:API version 3.59 requested,

WARNING:cinderclient.shell:downgrading to 3.50 based on server support.

[root@controller ~]# openstack volume create ceph-test --type ceph --size 1

+---------------------+--------------------------------------+

| Field | Value |

+---------------------+--------------------------------------+

| attachments | [] |

| availability_zone | nova |

| bootable | false |

| consistencygroup_id | None |

| created_at | 2023-06-03T15:36:09.000000 |

| description | None |

| encrypted | False |

| id | fdf6c10a-31dc-4245-ae7b-b7bc0e24477d |

| migration_status | None |

| multiattach | False |

| name | ceph-test |

| properties | |

| replication_status | None |

| size | 1 |

| snapshot_id | None |

| source_volid | None |

| status | creating |

| type | ceph |

| updated_at | None |

| user_id | 9ff19a0bedd5461aa3fff4a80f10054c |

+---------------------+--------------------------------------+

[root@controller ~]#

[root@storage01 ceph]# rbd ls volumes

volume-fdf6c10a-31dc-4245-ae7b-b7bc0e24477d

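The volume ID matches, so the verification succeeded

1. Glance storage configuration checked as correct: 1 point

1.2.11 Private cloud platform optimization: system network tuning [1 point]

Using the OpenStack cloud platform you built, configure the network buffers on the controller node so that every UDP connection (send and receive) is guaranteed a buffer of at least 358 KiB and at most 460 KiB, and make the setting persist across reboots.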

[root@controller ~]# cat /etc/sysctl.conf

# sysctl settings are defined through files in

# /usr/lib/sysctl.d/, /run/sysctl.d/, and /etc/sysctl.d/.

#

# Vendors settings live in /usr/lib/sysctl.d/.

# To override a whole file, create a new file with the same in

# /etc/sysctl.d/ and put new settings there. To override

# only specific settings, add a file with a lexically later

# name in /etc/sysctl.d/ and put new settings there.

#

# For more information, see sysctl.conf(5) and sysctl.d(5).

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

net.core.rmem_default = 358400

net.core.rmem_max = 460800

net.core.wmem_default = 358400

net.core.wmem_max = 460800

[root@controller ~]# sysctl -p

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

net.core.rmem_default = 358400

net.core.rmem_max = 460800

net.core.wmem_default = 358400

net.core.wmem_max = 460800
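The net.core buffer settings are expressed in bytes, so the KiB figures in the task have to be converted. The values used above, 358400 and 460800, correspond to 350 KiB and 450 KiB when 1 KiB = 1024 bytes; a literal reading of 358 KiB and 460 KiB gives different byte counts. The source does not say which values the grader expects, so the sketch below only shows the arithmetic (`kib_to_bytes` is a hypothetical helper).

```shell
#!/usr/bin/env bash
# Convert a size in KiB to bytes, using 1 KiB = 1024 bytes.
kib_to_bytes() {
    echo $(( $1 * 1024 ))
}

kib_to_bytes 358   # prints 366592
kib_to_bytes 460   # prints 471040
kib_to_bytes 350   # prints 358400, the rmem_default/wmem_default value used above
kib_to_bytes 450   # prints 460800, the rmem_max/wmem_max value used above
```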

1. System configuration of the network buffers checked as correct: 1 point

更多推荐

已为社区贡献1条内容

已为社区贡献1条内容

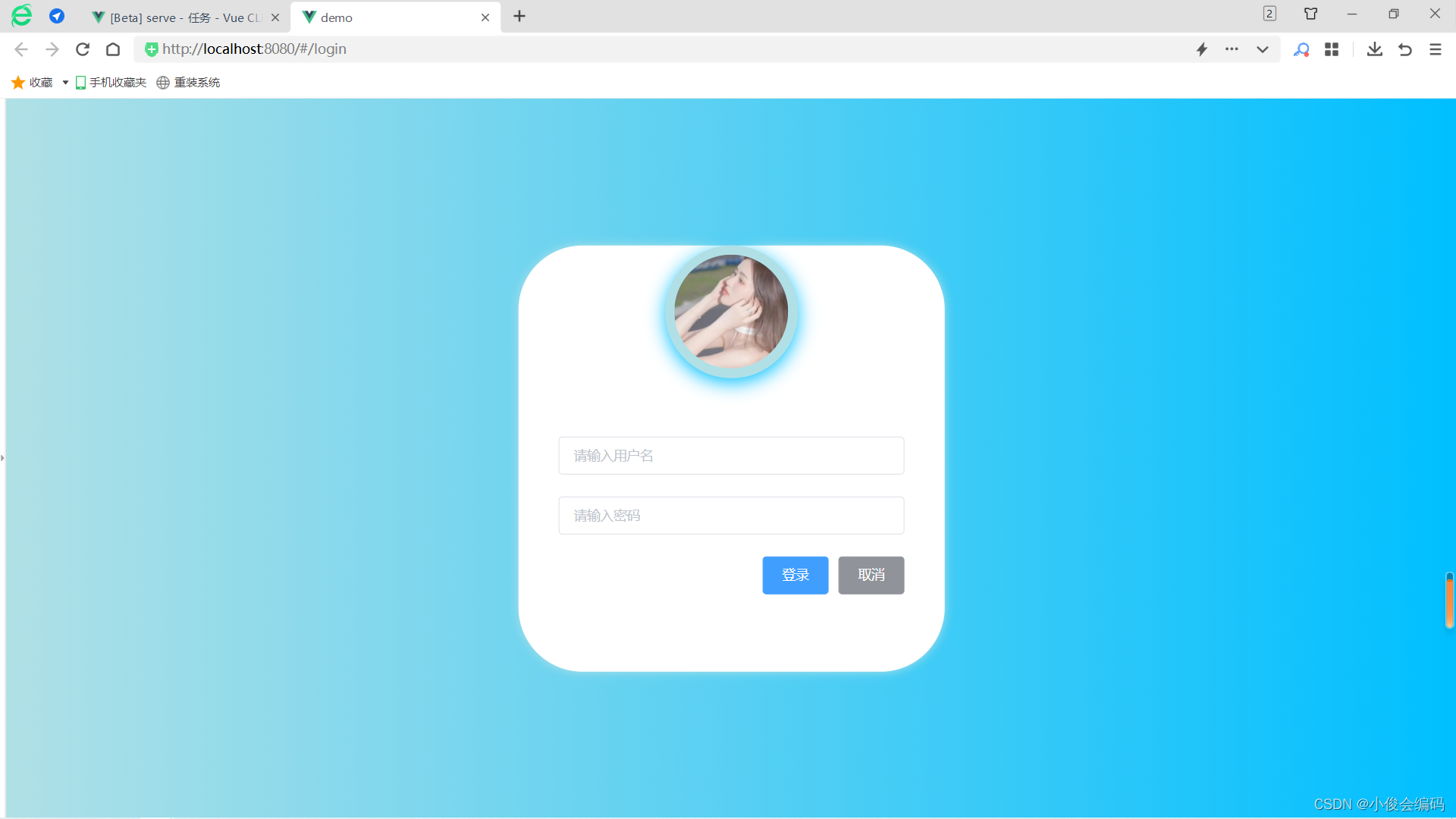

所有评论(0)